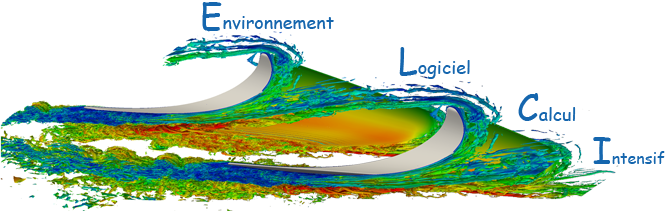

Computer and Computational Sciences at Exascale INRIA Large Scale Initiative

The C2S@Exa INRIA large-scale initiative is concerned with the development of numerical modeling methodologies that fully exploit the processing capabilities of modern massively parallel architectures in the context of a number of selected applications related to important scientific and technological challenges for the quality and the security of life in our society. Avalon is a core-team member, co-leading Pole 4 on Programming models.

Start Date: 2013

Duration: 4 years

Avalon Members: T. Gautier, C. Perez, J. Richard

More information on the C2S@Exa website

methodological needs of HPC application and runtime developers and to allow the study of real HPC systems from both the correctness and the performance points of view. To this end, we gather experts from the HPC, formal verification, and performance evaluation communities.

to propose to revisit the principles of existing schedulers after studying the main factors impacted by job submissions. Then, we will propose novel efficient algorithms for optimizing the schedule for unconventional objectives such as energy consumption, and design multi-objective optimization algorithms with provable approximation guarantees for some relevant combinations of objectives (performance, fairness, energy consumption, etc.). An important characteristic of the project is its balance between theoretical analysis and practical implementation. The most promising ideas will be integrated into reference systems such as SLURM and OAR and will lead to new features in implementations of programming standards such as MPI or OpenMP. We expect MOEBUS results to influence the future use of very large scale parallel platforms.

The last decade has brought tremendous changes to the characteristics of large scale distributed computing platforms. Large grids processing terabytes of information a day and peer-to-peer technology have become common, even though understanding how to efficiently exploit such platforms still raises many challenges. As demonstrated by the USS SimGrid project funded by the ANR in 2008, simulation has proved to be a very effective approach for studying such platforms. Although they are even more challenging, we think the issues raised by petaflop/exaflop computers and emerging cloud infrastructures can be addressed using a similar simulation methodology.

The goal of the SONGS project is to extend the applicability of the SimGrid simulation framework from Grids and Peer-to-Peer systems to Clouds and High Performance Computation systems. Each type of large-scale computing system will be addressed through a set of use cases and led by researchers recognized as experts in that area.